From Floppy Disks to the Modern Kernel: A Technical Retrospective

1. The MINIX Foundation and Microkernel Theory

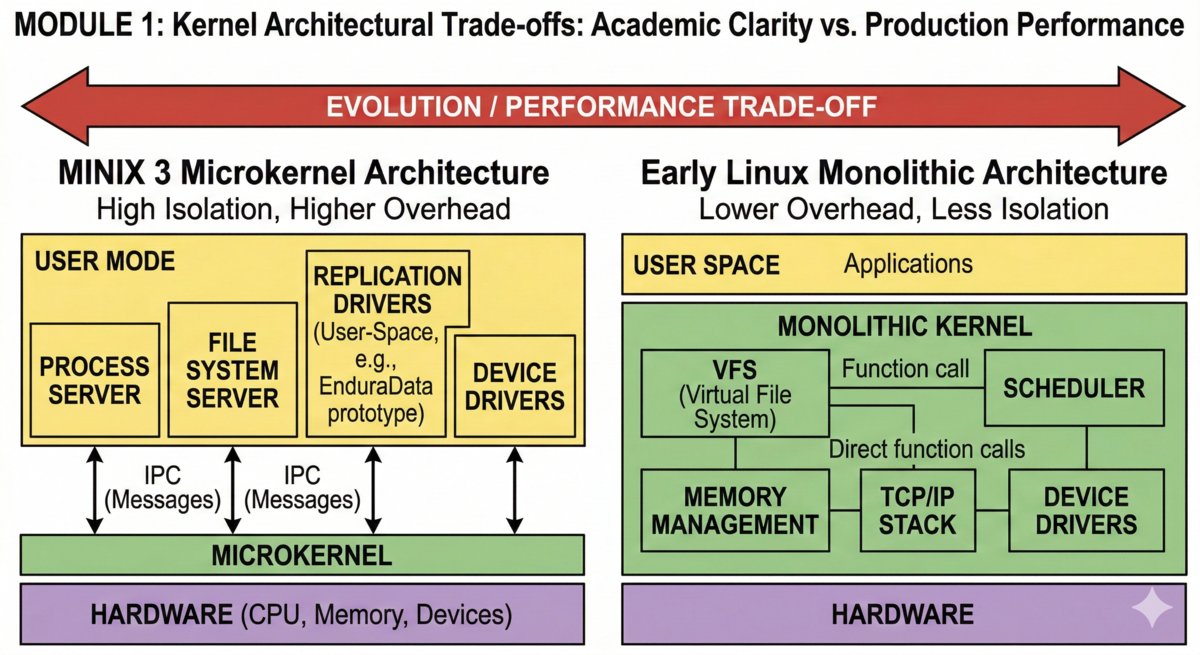

MINIX, created by Andrew Tanenbaum, was designed as a teaching tool. Its primary goal was code clarity and architectural “correctness” rather than raw performance.

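- Microkernel Architecture: Unlike the monolithic kernels that followed, MINIX split OS services into separate processes that communicated by message passing. The file system and memory manager ran as user-space servers; in the early releases device drivers were message-passing tasks that still shared the kernel's address space, and they moved fully into user space with MINIX 3.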

- Isolation and Reliability: This design meant that a crash in a printer driver wouldn’t necessarily bring down the entire system. Communication happened via IPC (Inter-Process Communication), a concept that remains central to modern high-availability systems.

- The Constraint: In the late 1980s, running this on an XT clone required significant patience. With an 8088 clocked at a few megahertz and at most 640 KB of conventional memory, every system call had to be lean. Because the 8088/8086 has neither a Memory Management Unit (MMU) nor a protected mode, the hardware could not enforce memory protection between processes.
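The isolation idea in the list above can be sketched in a few lines. This is a toy model, not MINIX code: the `Kernel` class, the server names, and the message strings are all hypothetical, and ordinary Python function calls stand in for real processes with separate address spaces. The only point it illustrates is that a fault in one server is contained at the message boundary while other servers keep answering.

```python
class Kernel:
    """Toy message router: its only job is to deliver messages to servers."""

    def __init__(self):
        self.servers = {}   # server name -> handler function
        self.dead = set()   # servers that have faulted

    def register(self, name, handler):
        self.servers[name] = handler
        self.dead.discard(name)

    def send(self, name, message):
        """Deliver a message and return a (status, payload) reply."""
        if name in self.dead or name not in self.servers:
            return ("error", f"{name} unavailable")
        try:
            return ("ok", self.servers[name](message))
        except Exception as fault:
            # The fault stops at the message boundary: only this server dies.
            self.dead.add(name)
            return ("error", f"{name} crashed: {fault}")


def printer_driver(message):
    """Hypothetical driver that faults on a malformed job."""
    if message == "malformed job":
        raise RuntimeError("bad print job")
    return f"printed {message}"


def file_server(message):
    """Hypothetical file server that simply echoes the request."""
    return f"handled {message}"


if __name__ == "__main__":
    kernel = Kernel()
    kernel.register("printer", printer_driver)
    kernel.register("fs", file_server)
    print(kernel.send("printer", "malformed job"))
    print(kernel.send("fs", "read notes.txt"))
```

Re-registering the printer handler after the fault models restarting a crashed driver without rebooting the rest of the system.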

Visualizing the fundamental trade-off: The modularity of the MINIX microkernel versus the integrated performance of the monolithic Linux kernel.

2. The Monolithic Shift: Performance over Purity

When Linus Torvalds began development, he opted for a monolithic kernel. In this architecture, the entire operating system—including the Virtual File System (VFS), device drivers, and memory management—runs within the same kernel address space.

- The 386 Influence: Unlike the 8088 in the XT, the Intel 386 provided a 32-bit protected mode and a paging MMU. This allowed the early Linux kernel to implement preemptive multitasking with hardware-enforced memory protection, and later demand paging.

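- The Performance Win: While the microkernel is theoretically more “elegant,” every request to a user-space service costs extra message passing and context switches. The monolithic approach avoids that overhead by handling the request with ordinary function calls inside the kernel. On early-1990s hardware, where each switch was expensive, this was a significant advantage for system throughput.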
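A rough way to see the overhead is to count how often a single request crosses the boundary between user space and the kernel. The sketch below is a deliberately simplified counting model, not a measurement. It assumes that every message costs two crossings (sender to kernel, kernel to receiver) and that a request travels down a chain of servers and the replies travel back up. Real costs depend on the hardware and on how a given kernel implements its IPC.

```python
def monolithic_crossings():
    """One trap into the kernel and one return to the application."""
    return 2


def microkernel_crossings(servers_in_chain):
    """Crossings for a request that passes through a chain of servers.

    Assumed model: a chain of n servers needs n request messages and
    n reply messages, and each message crosses the boundary twice.
    """
    messages = 2 * servers_in_chain
    return 2 * messages


if __name__ == "__main__":
    # Example: a read that goes through a file server and a disk driver.
    print("monolithic: ", monolithic_crossings())
    print("microkernel:", microkernel_crossings(2))
```

Under these assumptions a read served by a file server and a disk driver crosses the boundary eight times, against two for the monolithic call.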

3. The “Baptism by Fire”: Early Configuration Challenges

Deploying early Linux wasn’t a matter of clicking “Install.” It was an exercise in hardware-level troubleshooting.

The Kernel Rebuild

Before loadable kernel modules existed, drivers were compiled directly into the kernel binary. To support a specific network card (like a 3C509) or a SCSI controller, you had to navigate to /usr/src/linux, run make config (a grueling linear text prompt), and then make zImage. A single mistake, such as leaving out the driver for the root file system, resulted in a kernel panic on the next boot.
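The kind of mistake described above can be caught before rebuilding by checking the saved configuration for the options a machine needs. The following is a minimal sketch under stated assumptions: it expects a configuration file with lines of the form NAME=y and comments of the form "# NAME is not set", and the option names in the sample are hypothetical placeholders, not real kernel symbols.

```python
def parse_enabled(config_text):
    """Return the set of options set to 'y' in .config-style text."""
    enabled = set()
    for line in config_text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # blank lines and "# NAME is not set" comments
        name, _, value = line.partition("=")
        if value.strip() == "y":
            enabled.add(name.strip())
    return enabled


def missing_options(config_text, required):
    """List the required options that are not built into the kernel."""
    return sorted(set(required) - parse_enabled(config_text))


# Hypothetical option names, used only for illustration.
SAMPLE_CONFIG = """\
# CONFIG_EXAMPLE_SCSI is not set
CONFIG_EXAMPLE_ROOT_FS=y
CONFIG_EXAMPLE_NET_CARD=y
"""

if __name__ == "__main__":
    needed = ["CONFIG_EXAMPLE_ROOT_FS", "CONFIG_EXAMPLE_SCSI"]
    print(missing_options(SAMPLE_CONFIG, needed))
```

An empty result means every required driver is compiled in; anything listed would have to be enabled before running the build.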

XFree86 and the “Monitor Killer”

Configuring the graphical interface (X11) was high-stakes. You had to manually input the exact horizontal and vertical synchronization ranges into the XF86Config file.

The Danger: Entering a Modeline whose horizontal sync or vertical refresh rate exceeded your CRT monitor’s rated limits could cause hardware failure, because many older monitors had no protection against out-of-range signals. The administrator was the only thing standing between a working desktop and a smoking monitor.
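The check an administrator had to do by hand is simple arithmetic. The horizontal sync frequency is the dot clock divided by the total pixels per scan line, and the vertical refresh rate is the horizontal frequency divided by the total number of lines. The sketch below applies those two formulas to the timing numbers of a Modeline. The function names and the monitor limits are assumptions for illustration, and interlaced or doublescan modes are ignored.

```python
def modeline_rates(dot_clock_mhz, htotal, vtotal):
    """Return (horizontal sync in kHz, vertical refresh in Hz)."""
    hsync_khz = dot_clock_mhz * 1000.0 / htotal
    vrefresh_hz = hsync_khz * 1000.0 / vtotal
    return hsync_khz, vrefresh_hz


def within_limits(rates, hsync_range_khz, vrefresh_range_hz):
    """True if both rates fall inside the monitor's documented ranges."""
    hsync_khz, vrefresh_hz = rates
    return (hsync_range_khz[0] <= hsync_khz <= hsync_range_khz[1]
            and vrefresh_range_hz[0] <= vrefresh_hz <= vrefresh_range_hz[1])


if __name__ == "__main__":
    # Timings of the common 1024x768 mode:
    # 65 MHz dot clock, 1344 total pixels per line, 806 total lines.
    rates = modeline_rates(65.0, 1344, 806)
    print("hsync %.2f kHz, refresh %.2f Hz" % rates)
    # Hypothetical monitor limits, as they would be read from its manual.
    print(within_limits(rates, (30.0, 50.0), (50.0, 70.0)))
    print(within_limits(rates, (30.0, 38.0), (50.0, 70.0)))
```

The same mode passes on the first hypothetical monitor and fails on the second, whose horizontal range tops out below what the mode requires.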

4. Distribution Philosophies: Slackware vs. Red Hat

By the mid-90s, the challenge shifted from writing the kernel to managing the userland. Two distinct paths emerged:

- Slackware (The Transparent Purist): Relied on simple .tgz packages. It had no concept of automatic dependency resolution. This forced administrators to learn exactly where every binary and library lived in the filesystem hierarchy.

- Red Hat (The Birth of Abstraction): Introduced the RPM (Red Hat Package Manager). It featured a metadata database that tracked dependencies. While it couldn’t automatically fetch missing files in the pre-YUM era, it provided the first structured way to ensure system consistency.
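The difference between tracking dependencies and resolving them can be shown with a small model. This is a sketch of the general idea only, not RPM's actual database format or API: the record layout, the function names, and the package names are all hypothetical. The install step refuses to proceed when a requirement is unmet and reports what is missing, but it never fetches anything itself.

```python
class MissingDependencies(Exception):
    """Raised when a package needs capabilities nothing installed provides."""


def provided_capabilities(database):
    """Collect everything the installed packages provide."""
    capabilities = set()
    for record in database.values():
        capabilities |= record["provides"]
    return capabilities


def unmet_requirements(database, requires):
    """Return the requirements that no installed package satisfies."""
    return sorted(set(requires) - provided_capabilities(database))


def install(database, name, provides, requires):
    """Record a package, or refuse and report what is missing."""
    missing = unmet_requirements(database, requires)
    if missing:
        raise MissingDependencies(f"{name} needs: {', '.join(missing)}")
    database[name] = {"provides": set(provides), "requires": set(requires)}


if __name__ == "__main__":
    db = {}
    try:
        install(db, "editor", {"editor"}, {"libexample.so.1"})
    except MissingDependencies as problem:
        print(problem)  # the operator must find and install the library
    install(db, "libexample", {"libexample.so.1"}, set())
    install(db, "editor", {"editor"}, {"libexample.so.1"})
    print(sorted(db))
```

In the Slackware model there is no such database at all, so the first install would simply succeed and the missing library would surface only when the program failed to start.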

5. Technical Takeaway

Understanding these roots is essential for modern systems work. Whether you are managing an OpenBSD server or optimizing Linux for enterprise-grade data replication, you are navigating the same trade-offs established thirty years ago:

- Architecture: Where does the service live (Kernel vs. User space)?

- Resource Constraints: How does the software respect physical hardware limits?

- Abstraction: How much system complexity is hidden from the operator?

See also:

https://www.enduradata.com/attunity-alternative-replacement

https://www.enduradata.com/repliweb-attunity-alternative-linux-migration

https://www.enduradata.com/online-backup-data-protection-using-edpcloud
